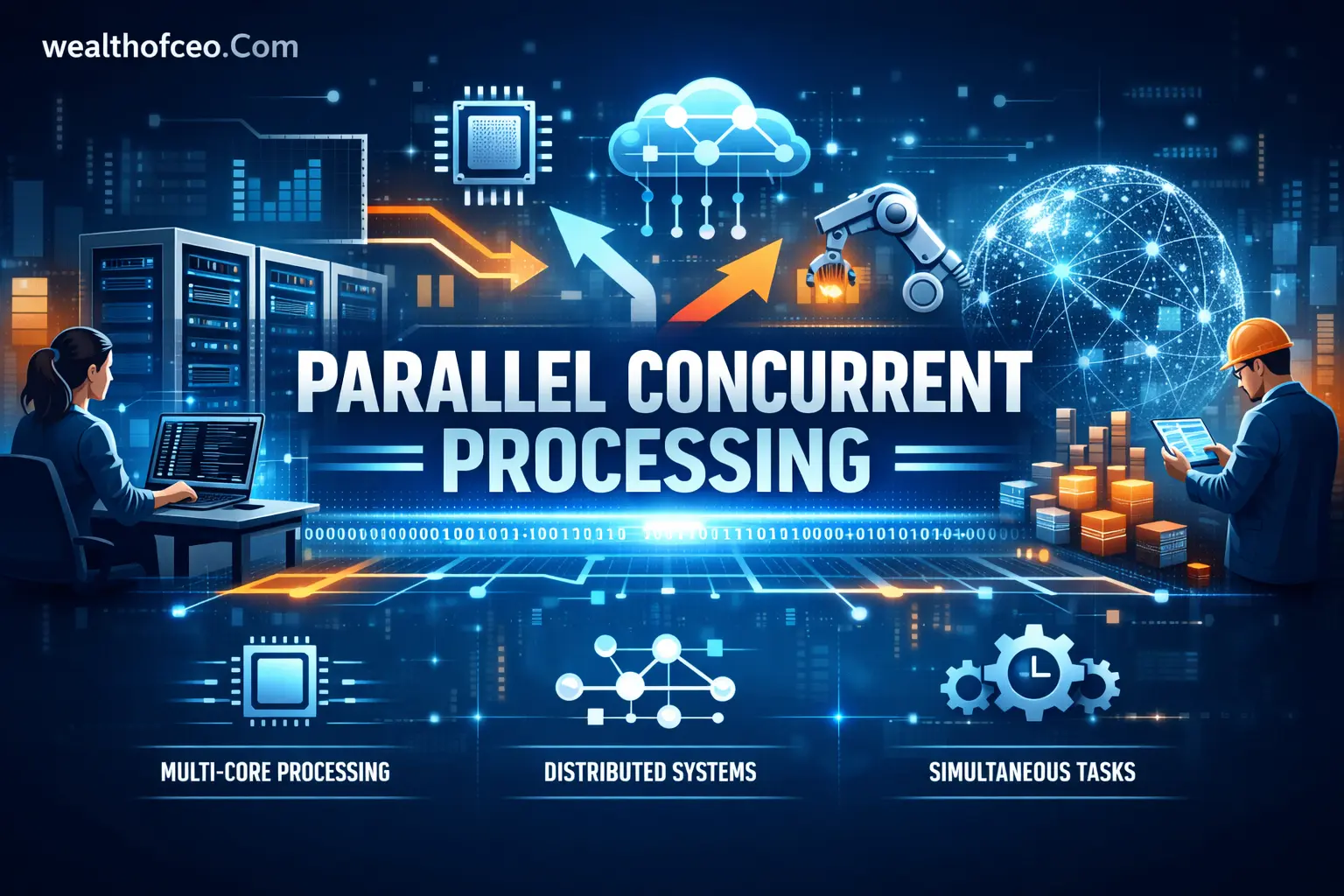

Parallel concurrent processing is a computing approach that allows multiple tasks to make progress at the same time while distributing execution across available hardware resources. It combines structured task management with true simultaneous execution on multi-core processors or distributed systems. This model is widely used in operating systems, enterprise platforms, cloud environments, and high-performance workloads where efficiency and responsiveness are critical.

In modern architectures, parallel concurrent processing enables systems to handle heavy computational tasks while still serving real-time user requests. By dividing work into smaller units and coordinating execution through threads, processes, or distributed nodes, organizations can improve throughput, scalability, and system stability. It has become a foundational design principle for scalable software and infrastructure built for large-scale demand.

What Is Parallel Concurrent Processing?

Parallel concurrent processing is a computing approach where multiple tasks make progress at the same time, and some of them may execute simultaneously on separate hardware resources.

- Concurrency focuses on managing multiple tasks efficiently.
- Parallelism focuses on executing tasks at the exact same time.
- Modern systems combine both to maximize performance and responsiveness.
- It is foundational in operating systems, cloud platforms, and large-scale applications.

Definition in Modern Computing Context

Parallel concurrent processing means structuring software so multiple tasks run independently, while the system distributes them across available processors or cores.

- Tasks are divided into smaller units of work.
- The runtime or OS schedules these units.
- Multi-core CPUs or distributed nodes execute work simultaneously.
- The design supports scalability and high throughput.

This model is standard in backend systems, data platforms, and AI workloads.

Difference Between Concurrency and Parallelism

Concurrency is about handling multiple tasks in overlapping time periods. Parallelism is about executing multiple tasks at the same time.

- Concurrency can exist on a single-core CPU using time slicing.
- Parallelism requires multiple cores or processors.
- Concurrency improves responsiveness.
- Parallelism improves computational speed.

All parallel systems are concurrent, but not all concurrent systems are parallel.
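
To make the distinction concrete, here is a minimal Python sketch of concurrency without parallelism: two coroutines make progress in overlapping time periods on a single thread. The names `worker`, `main`, and `run_demo` are illustrative assumptions, not part of any framework discussed here.

```python
import asyncio


async def worker(name: str, steps: int, log: list[str]) -> None:
    """Record each step, then yield so other tasks can run."""
    for i in range(steps):
        log.append(f"{name}{i}")
        await asyncio.sleep(0)  # cooperative yield point; no real waiting


async def main() -> list[str]:
    log: list[str] = []
    # Both coroutines share one thread; the event loop interleaves them.
    await asyncio.gather(worker("A", 3, log), worker("B", 3, log))
    return log


def run_demo() -> list[str]:
    return asyncio.run(main())
```

Calling `run_demo()` returns the steps of `A` and `B` interleaved, even though only one of them is executing at any instant. Running the same work on separate cores at the same moment would require threads or processes scheduled onto multiple CPUs.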

Why the Terms Are Often Confused

The terms are confused because both involve multiple tasks running “at once” from a user perspective.

- On single-core systems, tasks appear simultaneous due to rapid context switching.
- On multi-core systems, tasks may actually execute simultaneously.
- Many frameworks implement both models together.
- Documentation and marketing materials often use the terms interchangeably.

Clear architectural analysis is required to distinguish them properly.

How Parallel Concurrent Processing Works

Parallel concurrent processing works by dividing workloads into independent tasks and scheduling them across available computing resources.

- Work is decomposed into smaller units.
- A scheduler assigns tasks to threads or processes.
- Execution happens across cores, CPUs, or nodes.
- Synchronization ensures safe coordination.

The effectiveness depends on workload structure and hardware capacity.

Task Decomposition and Workload Distribution

Task decomposition means breaking a large job into smaller, independent parts.

- Identify segments that can run independently.
- Remove unnecessary task dependencies.
- Define input and output boundaries.
- Assign tasks to threads or worker processes.

Example: Splitting a large dataset into partitions for simultaneous processing.
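
The dataset example can be sketched in Python as follows. This is a minimal illustration under stated assumptions: `partition`, `process_chunk`, and `parallel_sum_of_squares` are hypothetical names, and the sum-of-squares workload is a stand-in for real processing.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(items, n_parts):
    """Split items into n_parts contiguous chunks of near-equal size."""
    size, extra = divmod(len(items), n_parts)
    chunks, start = [], 0
    for i in range(n_parts):
        # The first `extra` chunks each take one additional item.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def process_chunk(chunk):
    """Hypothetical unit of work with clear input and output boundaries."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(items, n_parts=4):
    """Process each partition on a worker, then combine the partial results."""
    chunks = partition(items, n_parts)
    with ThreadPoolExecutor(max_workers=n_parts) as pool:
        partials = list(pool.map(process_chunk, chunks))
    return sum(partials)
```

Each chunk has no dependency on the others, so the workers never need to coordinate until the final combine step. In standard CPython, threads do not run CPU-bound Python code in parallel because of the global interpreter lock, so a process pool would be the more likely choice for a workload like this one; the thread pool is used here only to keep the sketch short.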

Threading vs Multiprocessing Models

Threading uses multiple threads within the same process. Multiprocessing uses separate processes with independent memory spaces.

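- Threads share memory and are cheaper to create than processes.
- Processes have isolated memory and stronger fault isolation.
- Threads are suitable for I/O-bound tasks.
- Multiprocessing is often better for CPU-intensive tasks, especially in runtimes where a global interpreter lock limits threads (such as CPython).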

The choice depends on performance needs and safety requirements.
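
As a rough illustration of the I/O-bound case, the following Python sketch runs simulated blocking calls on a thread pool. The function `fake_io_call` is a hypothetical stand-in for a network or disk request, and the delay values are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_io_call(request_id: int, delay: float = 0.2) -> str:
    """Stand-in for a blocking I/O call; sleeping releases the GIL."""
    time.sleep(delay)
    return f"response-{request_id}"


def fetch_all(request_ids, max_workers: int = 4):
    """Run the I/O-bound calls on a thread pool and time the whole batch."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        responses = list(pool.map(fake_io_call, request_ids))
    elapsed = time.perf_counter() - started
    return responses, elapsed
```

With four workers, four calls of 0.2 seconds each should finish in roughly the time of one call rather than the sum of all four, because the threads wait concurrently. For CPU-bound work, `ProcessPoolExecutor` from the same module offers the same interface with isolated memory per worker, at the cost of process startup and data serialization.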

CPU Cores, Clusters, and Distributed Systems

Execution happens across hardware resources such as cores or distributed nodes.

- Multi-core CPUs enable true parallel execution.
- Clusters distribute work across multiple machines.
- Distributed systems communicate over networks.
- Cloud platforms dynamically scale compute capacity.

Infrastructure design directly affects scalability and fault tolerance.

Synchronization and Communication Mechanisms

Synchronization ensures tasks coordinate safely without corrupting shared data.

- Mutexes and locks protect critical sections.
- Semaphores manage access limits.
- Message queues enable safe inter-process communication.
- Atomic operations reduce locking overhead.

Poor synchronization design leads to instability and unpredictable results.
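
A minimal Python sketch of a lock protecting a critical section is shown below. The class `SafeCounter` and the function `run_counter` are illustrative names chosen for this example.

```python
import threading


class SafeCounter:
    """A counter whose read-modify-write step is guarded by a mutex."""

    def __init__(self) -> None:
        self._value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        with self._lock:  # critical section: one thread at a time
            self._value += 1

    @property
    def value(self) -> int:
        with self._lock:
            return self._value


def run_counter(n_threads: int = 8, increments: int = 10_000) -> int:
    """Increment one shared counter from several threads and return the total."""
    counter = SafeCounter()

    def work() -> None:
        for _ in range(increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value
```

Because every increment happens inside the lock, the final value always equals `n_threads * increments`. Without the lock, updates from different threads could overwrite each other and the total could come out lower.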

Core Components and Architecture

The architecture consists of execution units, memory structures, scheduling logic, and load balancing mechanisms.

- Execution units: threads or processes.
- Memory model: shared or distributed.
- Scheduler: assigns CPU time.
- Coordination layer: manages communication.

Each component must align with workload demands.

Process and Thread Management

Process and thread management controls creation, execution, and termination of tasks.

- Define lifecycle policies.
- Avoid uncontrolled thread spawning.
- Set execution priorities.
- Monitor resource consumption.

Controlled management prevents system overload.

Memory Models (Shared vs Distributed)

Shared memory allows multiple threads to access the same data. Distributed memory keeps data isolated across nodes.

- Shared memory is faster but requires synchronization.
- Distributed memory improves fault isolation.
- Data consistency must be maintained.
- Network latency impacts distributed performance.

Architecture selection impacts performance and complexity.

Scheduling and Context Switching

Scheduling determines which task runs and for how long.

- Preemptive scheduling allows interruption.
- Cooperative scheduling relies on task yielding.
- Context switching introduces overhead.
- Fairness policies prevent starvation.

Efficient scheduling improves system stability.

Load Balancing Mechanisms

Load balancing distributes work evenly across available resources.

- Static balancing assigns tasks upfront.
- Dynamic balancing adjusts during runtime.
- Work-stealing improves utilization.
- Monitoring tools detect imbalances.

Poor distribution leads to idle resources and bottlenecks.
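
One simple form of dynamic balancing is a shared task queue that idle workers pull from. The Python sketch below assumes a hypothetical `run_workers` function and a trivial doubling workload. It is not work-stealing, which typically gives each worker its own queue and lets idle workers take tasks from others.

```python
import queue
import threading


def run_workers(tasks, n_workers: int = 3):
    """Workers pull from one shared queue, so faster workers take more tasks."""
    work: queue.Queue = queue.Queue()
    for task in tasks:
        work.put(task)

    results = []
    handled = [0] * n_workers  # tasks completed per worker
    results_lock = threading.Lock()

    def worker(idx: int) -> None:
        while True:
            try:
                item = work.get_nowait()
            except queue.Empty:
                return  # queue drained; this worker is done
            value = item * 2  # hypothetical unit of work
            with results_lock:
                results.append(value)
                handled[idx] += 1

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(results), handled
```

The `handled` list shows how many tasks each worker completed. Unlike a static split, the counts are not fixed in advance: a worker that finishes early simply takes the next item.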

Parallel vs Concurrent Processing: Key Differences

Parallel and concurrent processing differ in execution behavior and hardware reliance.

- Concurrency manages task overlap.
- Parallelism executes tasks simultaneously.
- Concurrency improves responsiveness.
- Parallelism improves computational throughput.

Understanding the difference prevents design errors.

Execution Model Comparison

Execution models define how tasks progress.

- Concurrent systems interleave tasks.
- Parallel systems execute tasks at the same time.
- Hybrid systems combine both.
- Real-world systems usually adopt hybrid models.

Architectural clarity ensures correct implementation.

Hardware Requirements

Hardware requirements differ significantly.

- Concurrency can run on single-core systems.
- Parallelism requires multi-core or multi-CPU setups.
- GPUs enable massive parallel workloads.
- Distributed systems require networked infrastructure.

Capacity planning must consider workload type.

Performance Trade-offs

Performance depends on workload characteristics.

- Parallel systems reduce computation time.
- Concurrency improves system responsiveness.
- Synchronization adds overhead.
- Communication latency reduces efficiency.

Blind parallelization may degrade performance.

When to Use Each Approach

Use concurrency for responsiveness and multitasking. Use parallelism for heavy computation.

- Web servers benefit from concurrency.
- Scientific simulations require parallelism.
- Data pipelines often combine both.
- System design should match workload behavior.

Choose based on measurable performance needs.

Real-World Use Cases and Industry Applications

Parallel concurrent processing is used wherever scale and responsiveness are critical.

- Enterprise systems
- Cloud-native platforms
- AI workloads
- Financial transaction systems

It underpins modern digital infrastructure.

High-Performance Computing (HPC)

HPC uses large clusters to solve complex scientific problems.

- Climate modeling
- Genomic analysis
- Physics simulations
- Engineering computations

These workloads require massive parallel execution.

Cloud and Distributed Systems

Cloud platforms rely on distributed processing for elasticity.

- Auto-scaling services
- Distributed storage systems
- Big data analytics
- Event-driven architectures

Concurrency ensures responsiveness under load.

Artificial Intelligence and Machine Learning

AI training relies on parallel computation.

- GPUs process tensors simultaneously.
- Distributed training splits datasets.
- Data preprocessing runs concurrently.
- Inference systems handle multiple requests.

Performance directly impacts training time and cost.

Web Servers and Microservices Architectures

Modern web systems rely heavily on concurrency.

- Handle thousands of requests simultaneously.
- Separate services process tasks independently.
- Asynchronous I/O improves throughput.
- Container orchestration distributes load.

Reliability depends on correct concurrency design.

Benefits of Parallel Concurrent Processing

The main benefit is improved performance and scalability without sacrificing responsiveness.

- Higher throughput
- Better hardware utilization
- Reduced processing time
- Improved user experience

It enables large-scale system growth.

Improved Throughput and Performance

Throughput increases when tasks run simultaneously.

- Divide heavy workloads.
- Use multi-core processors.
- Reduce blocking operations.
- Optimize scheduling.

Performance gains must be measured, not assumed.

Better Resource Utilization

Systems avoid idle CPU cycles.

- Distribute tasks evenly.
- Balance memory usage.
- Prevent resource starvation.
- Monitor utilization metrics.

Efficiency lowers operational cost.

Scalability in Modern Systems

Scalability means handling growth without redesign.

- Horizontal scaling adds nodes.
- Vertical scaling adds CPU or memory.
- Distributed coordination maintains consistency.
- Load balancers manage traffic growth.

Scalability planning must be proactive.

Enhanced System Responsiveness

Responsive systems improve user experience.

- Non-blocking operations reduce wait time.
- Concurrent request handling avoids bottlenecks.
- Background processing isolates heavy tasks.
- Timeouts prevent system freeze.

Responsiveness is critical for service reliability.
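
The timeout point can be sketched with Python's `asyncio.wait_for`. The coroutine `slow_operation` is a hypothetical stand-in for a downstream call, and the fallback string is an arbitrary choice for illustration.

```python
import asyncio


async def slow_operation(delay: float) -> str:
    """Stand-in for a downstream call that takes `delay` seconds."""
    await asyncio.sleep(delay)
    return "done"


async def call_with_timeout(delay: float, timeout: float) -> str:
    """Return the result, or a fallback if the call exceeds the timeout."""
    try:
        return await asyncio.wait_for(slow_operation(delay), timeout=timeout)
    except asyncio.TimeoutError:
        # The slow call is cancelled, so the caller is not blocked indefinitely.
        return "timed out"
```

For example, `asyncio.run(call_with_timeout(1.0, 0.05))` gives up after about 50 milliseconds instead of waiting the full second. Whether to retry, return a cached value, or surface an error after a timeout is a design decision that depends on the service.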

Challenges and Technical Risks

Improper implementation introduces serious risks.

- Data corruption
- Deadlocks
- Performance degradation
- Debugging difficulty

Strong design discipline is required.

Race Conditions and Deadlocks

Race conditions occur when tasks access shared data without proper coordination, so the result depends on timing. Deadlocks occur when tasks wait indefinitely for resources that are held by each other.

- Protect shared resources.
- Use minimal locking.
- Detect circular wait conditions.
- Implement timeout safeguards.

These issues can halt production systems.
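
Two of the safeguards above, avoiding circular waits and using timeouts, can be sketched in Python as follows. The `Account` class and `transfer` function are hypothetical examples: locks are always acquired in a fixed order (by `account_id`), and each acquisition gives up after a timeout.

```python
import threading


class Account:
    def __init__(self, account_id: int, balance: int) -> None:
        self.account_id = account_id
        self.balance = balance
        self.lock = threading.Lock()


def transfer(src: Account, dst: Account, amount: int, timeout: float = 1.0) -> bool:
    """Move funds between accounts; return False if it cannot complete."""
    if src is dst:
        raise ValueError("source and destination must differ")
    # Fixed global lock order (lowest id first) rules out circular waits.
    first, second = sorted((src, dst), key=lambda a: a.account_id)
    if not first.lock.acquire(timeout=timeout):
        return False  # timeout safeguard: give up rather than wait forever
    try:
        if not second.lock.acquire(timeout=timeout):
            return False
        try:
            if src.balance < amount:
                return False  # insufficient funds; nothing is changed
            src.balance -= amount
            dst.balance += amount
            return True
        finally:
            second.lock.release()
    finally:
        first.lock.release()
```

If two threads transfer in opposite directions at the same time, both try to take the lower-numbered account's lock first, so neither can hold one lock while waiting for the other in a cycle.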

Synchronization Overhead

Synchronization adds computational cost.

- Locking reduces parallel efficiency.
- Excessive coordination slows execution.
- Fine-grained locks reduce contention.
- Lock-free designs improve throughput.

Balance safety with performance.

Debugging and Testing Complexity

Concurrent systems are harder to test.

- Bugs may be intermittent.
- Timing issues are unpredictable.
- Reproducing errors is difficult.
- Stress testing is required.

Comprehensive logging is essential.

Resource Contention Issues

Resource contention occurs when tasks compete for limited resources.

- CPU contention reduces throughput.
- Memory pressure increases latency.
- Disk and network bottlenecks emerge.
- Thread pool exhaustion can stall request handling or crash the system.

Capacity planning reduces risk.

Best Practices for Implementation

Effective implementation requires disciplined architecture and controlled execution management.

- Plan concurrency early.
- Minimize shared dependencies.
- Measure performance continuously.
- Test under realistic loads.

Reactive fixes are costly.

Designing for Scalability from the Start

Scalability must be built into architecture.

- Design stateless services.
- Use distributed queues.
- Avoid centralized bottlenecks.
- Separate compute and storage layers.

Retrofitting scalability is difficult.

Minimizing Shared State

Reducing shared data lowers synchronization risk.

- Prefer immutable data structures.
- Use message passing.
- Isolate services.
- Limit global variables.

Less sharing equals fewer conflicts.
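
A small Python sketch of message passing with immutable messages is shown below. The `Order` dataclass, the `_STOP` sentinel, and the `run_pipeline` function are illustrative assumptions rather than a prescribed design.

```python
import queue
import threading
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    """Immutable message: fields cannot be reassigned after creation."""
    order_id: int
    amount: int


_STOP = object()  # sentinel telling the consumer to finish


def consumer(inbox: queue.Queue, totals: queue.Queue) -> None:
    """Own the running total privately; communicate only through queues."""
    total = 0
    while True:
        msg = inbox.get()
        if msg is _STOP:
            break
        total += msg.amount
    totals.put(total)


def run_pipeline(orders) -> int:
    inbox: queue.Queue = queue.Queue()
    totals: queue.Queue = queue.Queue()
    worker = threading.Thread(target=consumer, args=(inbox, totals))
    worker.start()
    for order in orders:
        inbox.put(order)
    inbox.put(_STOP)
    worker.join()
    return totals.get()
```

No lock appears in this code because no variable is written by more than one thread: the producer and consumer exchange frozen `Order` objects through thread-safe queues, and the running total lives only inside the consumer.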

Effective Thread and Process Management

Controlled management improves stability.

- Set thread pool limits.
- Avoid unbounded concurrency.
- Monitor thread lifecycle.
- Handle failures gracefully.

Excessive threads reduce performance.
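
The effect of a thread pool limit can be observed with a short Python sketch. The function `run_bounded` is a hypothetical helper that records the highest number of tasks running at once; the 10 millisecond sleep stands in for real work.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def run_bounded(n_tasks: int = 20, max_workers: int = 4):
    """Run tasks on a bounded pool and report the peak concurrency observed."""
    active = 0
    peak = 0
    lock = threading.Lock()

    def task(i: int) -> int:
        nonlocal active, peak
        with lock:
            active += 1
            peak = max(peak, active)
        time.sleep(0.01)  # simulated work
        with lock:
            active -= 1
        return i

    # max_workers caps how many tasks execute at once; the rest wait in a queue.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(task, range(n_tasks)))
    return results, peak
```

However many tasks are submitted, `peak` never exceeds `max_workers`, which is the property that protects a system from unbounded thread creation under load.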

Performance Monitoring and Optimization

Continuous monitoring ensures stability.

- Track CPU utilization.
- Measure latency and throughput.
- Identify blocking calls.
- Profile memory consumption.

Optimization must rely on metrics.

Tools, Frameworks, and Technologies

Modern ecosystems provide built-in concurrency support.

- Languages
- Runtime libraries
- Containers
- Monitoring systems

Tool choice affects maintainability.

Programming Languages with Native Support

Several languages support concurrency and parallelism natively.

- Go uses goroutines.
- Java provides thread pools and executors.
- Python offers multiprocessing and async frameworks.
- C++ supports multi-threading libraries.

Language selection should match workload demands.

Concurrency Libraries and APIs

Libraries simplify implementation.

- Thread pools manage execution.
- Futures and promises handle asynchronous results.
- Reactive frameworks support event-driven systems.
- Distributed task queues scale workloads.

Libraries reduce low-level complexity.
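
As one example of futures in practice, the Python sketch below submits work to a thread pool and collects each result or error as it completes. The functions `risky_square` and `collect` are hypothetical names for illustration.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def risky_square(x: int) -> int:
    """Hypothetical task that fails for negative input."""
    if x < 0:
        raise ValueError(f"negative input: {x}")
    return x * x


def collect(inputs):
    """Submit every input and gather results and errors separately."""
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=4) as pool:
        # Map each future back to the input that produced it.
        futures = {pool.submit(risky_square, x): x for x in inputs}
        for future in as_completed(futures):
            x = futures[future]
            try:
                results[x] = future.result()  # re-raises the task's exception
            except ValueError as exc:
                errors[x] = str(exc)
    return results, errors
```

A future holds either a value or an exception, so a failure in one task surfaces at `future.result()` without stopping the others.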

Containerization and Orchestration Platforms

Containers enable scalable deployment.

- Docker isolates workloads.
- Kubernetes manages scaling.
- Auto-scaling adjusts resources dynamically.
- Service meshes manage communication.

Infrastructure must align with concurrency models.

Monitoring and Profiling Tools

Monitoring tools detect bottlenecks.

- CPU profilers measure hotspots.
- Distributed tracing identifies latency sources.
- Log aggregation tracks failures.
- Performance dashboards provide visibility.

Visibility prevents hidden failures.

Compliance, Security, and Governance Considerations

Concurrency affects data safety and regulatory compliance.

- Data consistency must be guaranteed.
- Secure communication channels are required.
- Audit trails must remain accurate.
- Enterprise controls must be enforced.

Governance frameworks must reflect system complexity.

Data Integrity and Transaction Safety

Data integrity requires consistent updates.

- Use atomic transactions.
- Apply database isolation levels.
- Implement rollback mechanisms.
- Validate concurrent updates.

Financial and healthcare systems require strict controls.
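
Atomic transactions with rollback can be sketched using Python's built-in `sqlite3` module. The `accounts` table, its `CHECK` constraint, and the `make_db`, `transfer`, and `balances` helpers are assumptions made for this example.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical accounts."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts ("
        "name TEXT PRIMARY KEY, "
        "balance INTEGER NOT NULL CHECK (balance >= 0))"
    )
    conn.executemany(
        "INSERT INTO accounts VALUES (?, ?)", [("alice", 100), ("bob", 50)]
    )
    conn.commit()
    return conn


def transfer(conn: sqlite3.Connection, src: str, dst: str, amount: int) -> bool:
    """Apply both updates atomically; roll back both if either fails."""
    try:
        with conn:  # commits on success, rolls back on exception
            conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE name = ?",
                (amount, dst),
            )
            conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE name = ?",
                (amount, src),
            )
        return True
    except sqlite3.IntegrityError:
        return False  # CHECK constraint failed; the credit was undone too


def balances(conn: sqlite3.Connection) -> dict:
    return dict(conn.execute("SELECT name, balance FROM accounts"))
```

In a failed transfer, the credit to the destination is applied first and then undone when the debit violates the constraint, so no partial update is ever committed.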

Secure Inter-Process Communication

Communication between services must be protected.

- Encrypt network traffic.
- Authenticate service endpoints.
- Validate message formats.
- Apply least-privilege access policies.

Security failures can expose sensitive data.

Industry Standards and Enterprise Controls

Standards define acceptable practices.

- Follow recognized security frameworks, such as ISO/IEC 27001.
- Implement access logging.
- Maintain audit compliance.
- Conduct periodic risk assessments.

Enterprise governance reduces operational risk.

Common Mistakes to Avoid

Common design errors reduce reliability and performance.

- Overcomplicating architecture
- Ignoring hardware constraints
- Excessive locking
- Misinterpreting metrics

Disciplined engineering prevents avoidable failures.

Over-Parallelization

More threads do not always mean better performance.

- Excess context switching reduces efficiency.
- Synchronization overhead increases.
- CPU saturation causes instability.
- Benchmark before scaling.

Parallelism must be measured.
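
One common way to estimate the ceiling on parallel speedup before benchmarking is Amdahl's law, which this article does not otherwise cover. The Python sketch below assumes a hypothetical helper name, `amdahl_speedup`, and an idealized model with no synchronization or communication overhead, so real results are usually lower.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Ideal speedup when `parallel_fraction` of the work scales with workers.

    Amdahl's law: speedup = 1 / ((1 - p) + p / n)
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)
```

With 90% of the work parallelizable, eight workers give a theoretical speedup of about 4.7x, and no number of workers can exceed 10x because the serial 10% remains. An estimate like this is a starting point for capacity planning, not a substitute for measurement.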

Ignoring Hardware Constraints

Hardware limits define performance boundaries.

- Core count limits true parallel execution.
- Memory bandwidth affects speed.
- Network latency impacts distributed systems.
- Storage I/O can become a bottleneck.

Design within infrastructure limits.

Poor Synchronization Design

Incorrect synchronization creates instability.

- Overuse of global locks.
- Missing atomic operations.
- Lack of timeout handling.
- Uncontrolled shared resources.

Design minimal and precise coordination.

Misunderstanding Performance Metrics

Misreading metrics leads to wrong conclusions.

- High CPU usage is not always bad.
- Low average latency may hide occasional spikes and instability.
- Throughput must be measured under load.
- Benchmark results require consistent conditions.

Decisions must rely on accurate data.

Implementation Checklist for Engineers and Architects

A structured checklist reduces implementation risk.

- Assess infrastructure readiness.
- Define architectural model.
- Validate through testing.
- Monitor continuously after deployment.

Documentation must support long-term maintenance.

System Readiness Assessment

Assess whether infrastructure supports concurrent workloads.

- Verify CPU core availability.
- Check memory capacity.
- Evaluate network throughput.
- Review storage performance.

Gaps must be resolved before deployment.

Architecture Planning Steps

Planning prevents structural flaws.

- Identify independent tasks.
- Define communication mechanisms.
- Select appropriate frameworks.
- Establish monitoring standards.

Document decisions clearly.

Testing and Validation Criteria

Validation ensures stability.

- Perform stress testing.
- Conduct race condition analysis.
- Validate failover mechanisms.
- Simulate peak workloads.

Testing must mirror production conditions.

Deployment and Monitoring Checklist

Deployment must include ongoing oversight.

- Configure auto-scaling.
- Enable centralized logging.
- Set performance alerts.
- Define incident response procedures.

Monitoring continues after launch.

Frequently Asked Questions

What is parallel concurrent processing in simple terms?

Parallel concurrent processing is a computing method where multiple tasks are managed at the same time, and some are executed simultaneously across multiple CPU cores or systems.

Is parallel processing the same as concurrent processing?

No. Concurrent processing manages multiple tasks in overlapping time periods, while parallel processing executes tasks at the exact same time on separate hardware resources.

Where is parallel concurrent processing commonly used?

It is commonly used in cloud computing, high-performance computing (HPC), artificial intelligence workloads, large-scale web servers, and distributed enterprise systems.

What are the main risks of implementing parallel systems?

The main risks include race conditions, deadlocks, synchronization overhead, debugging complexity, and resource contention that can reduce performance or cause instability.

Do small applications need parallel concurrent processing?

Not always. Small or low-traffic applications may perform efficiently with basic concurrency alone, and adding parallelism can introduce unnecessary complexity.